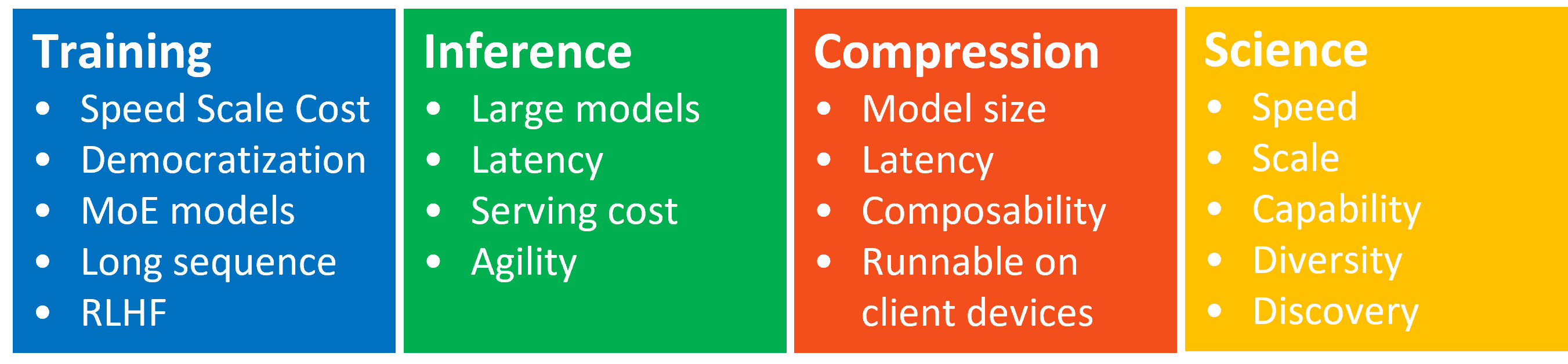

DeepSpeed Compression: A composable library for extreme compression and zero-cost quantization - Microsoft Research

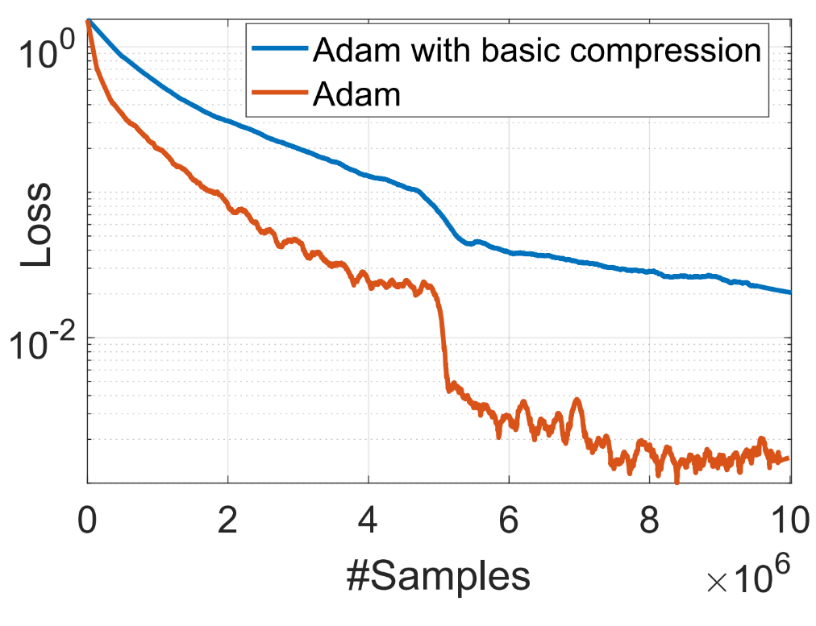

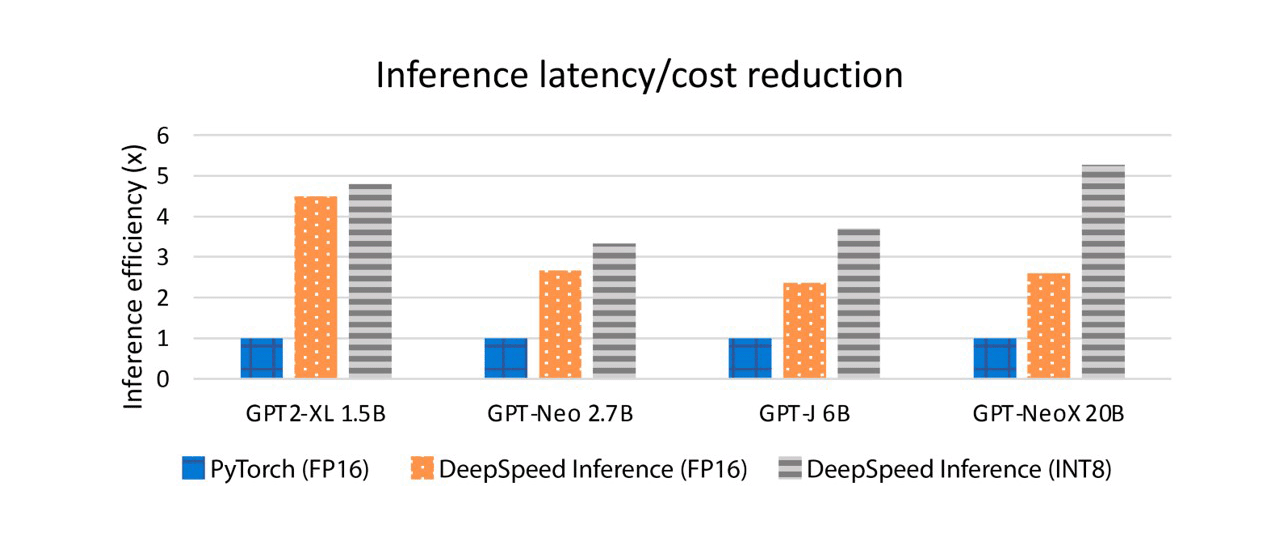

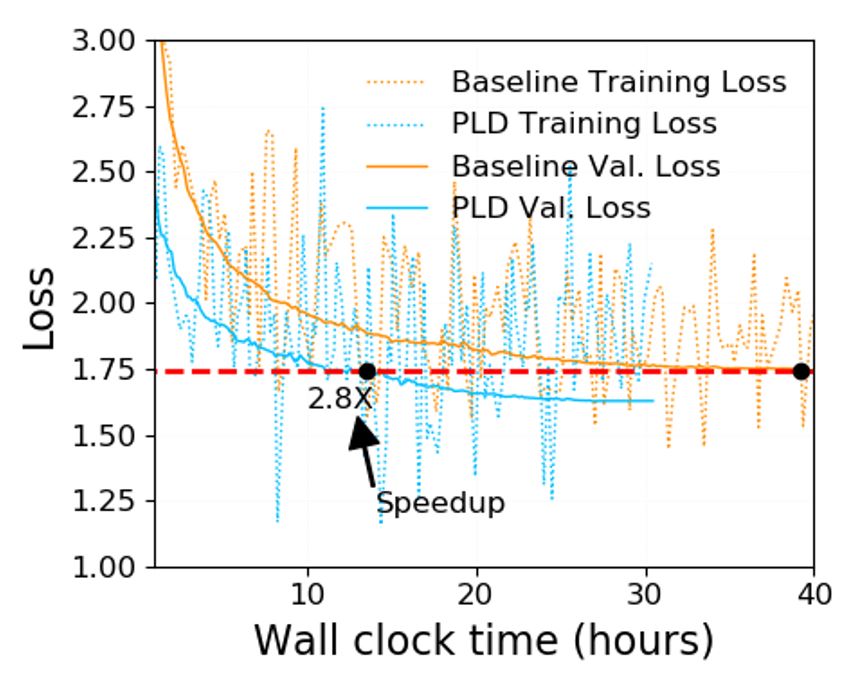

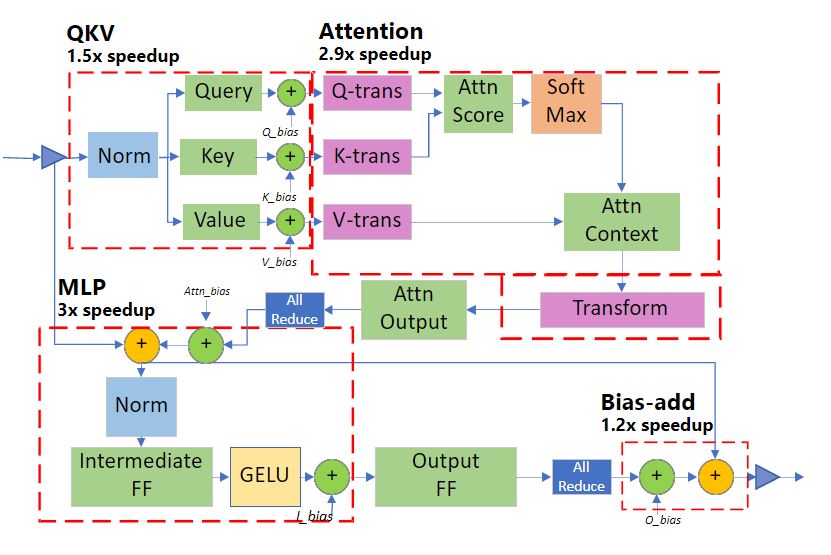

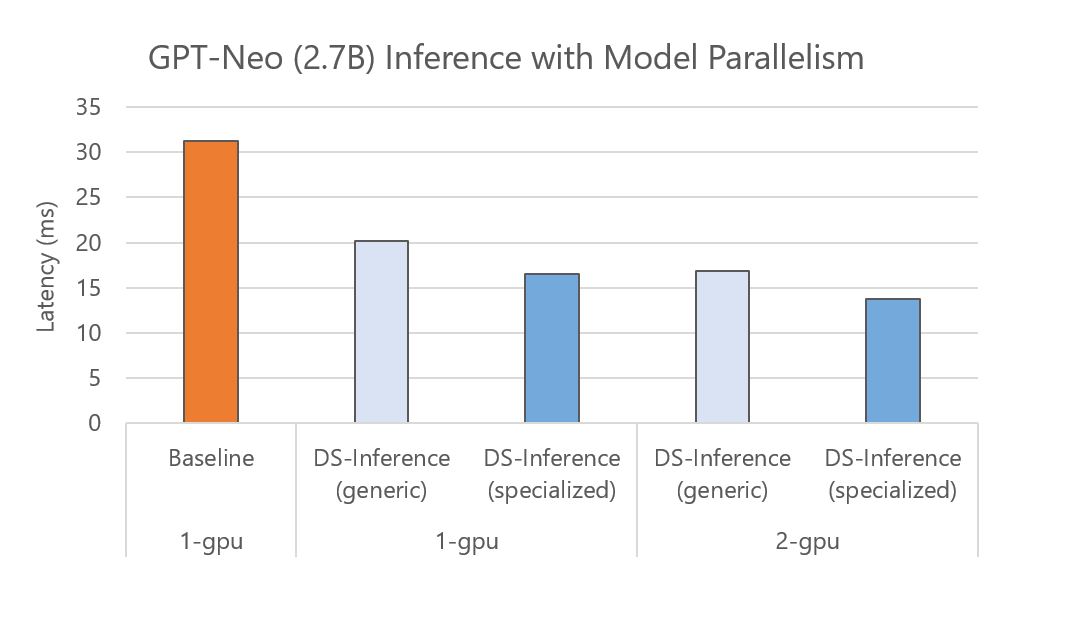

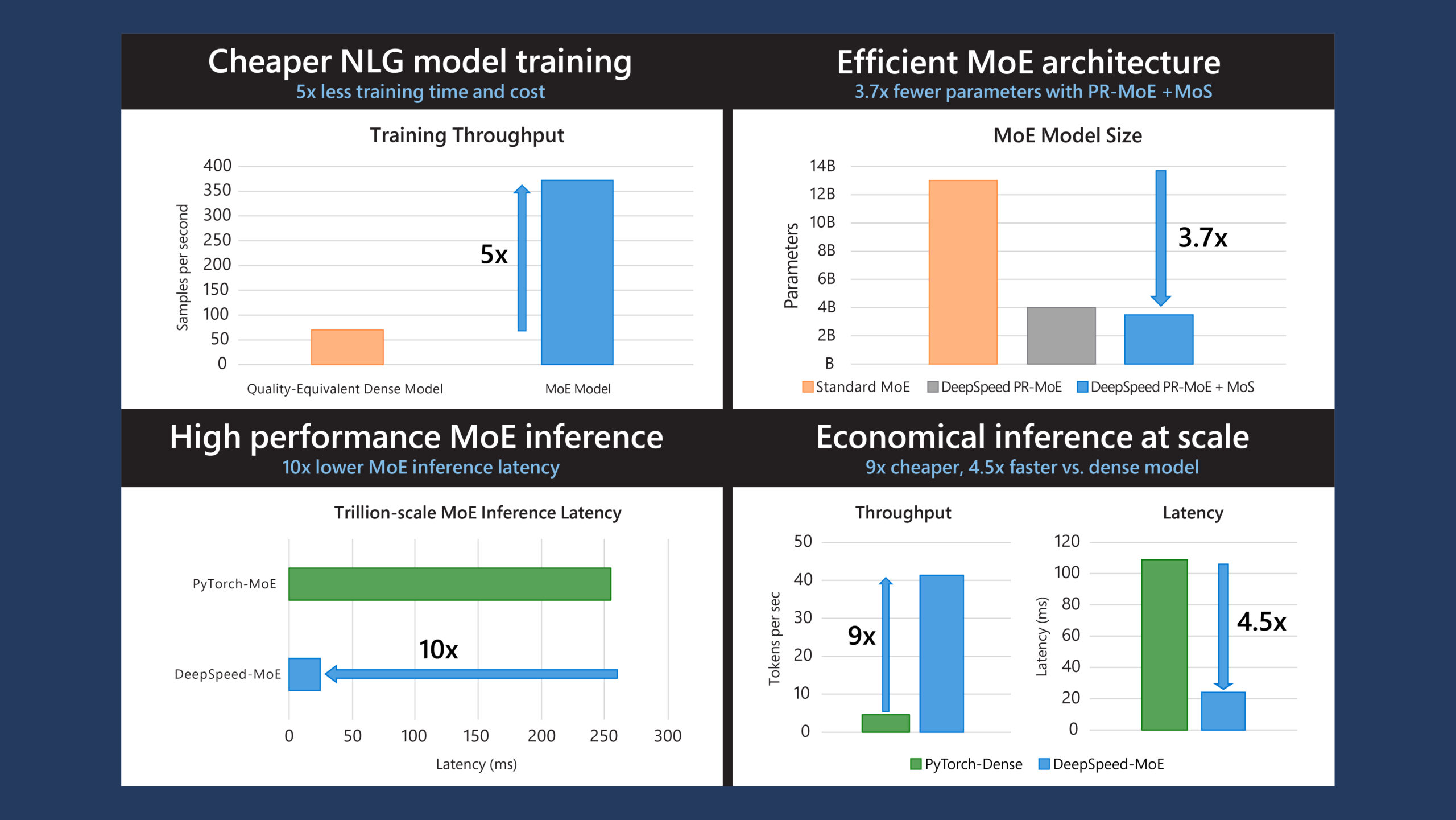

DeepSpeed: Accelerating large-scale model inference and training via system optimizations and compression - Microsoft Research

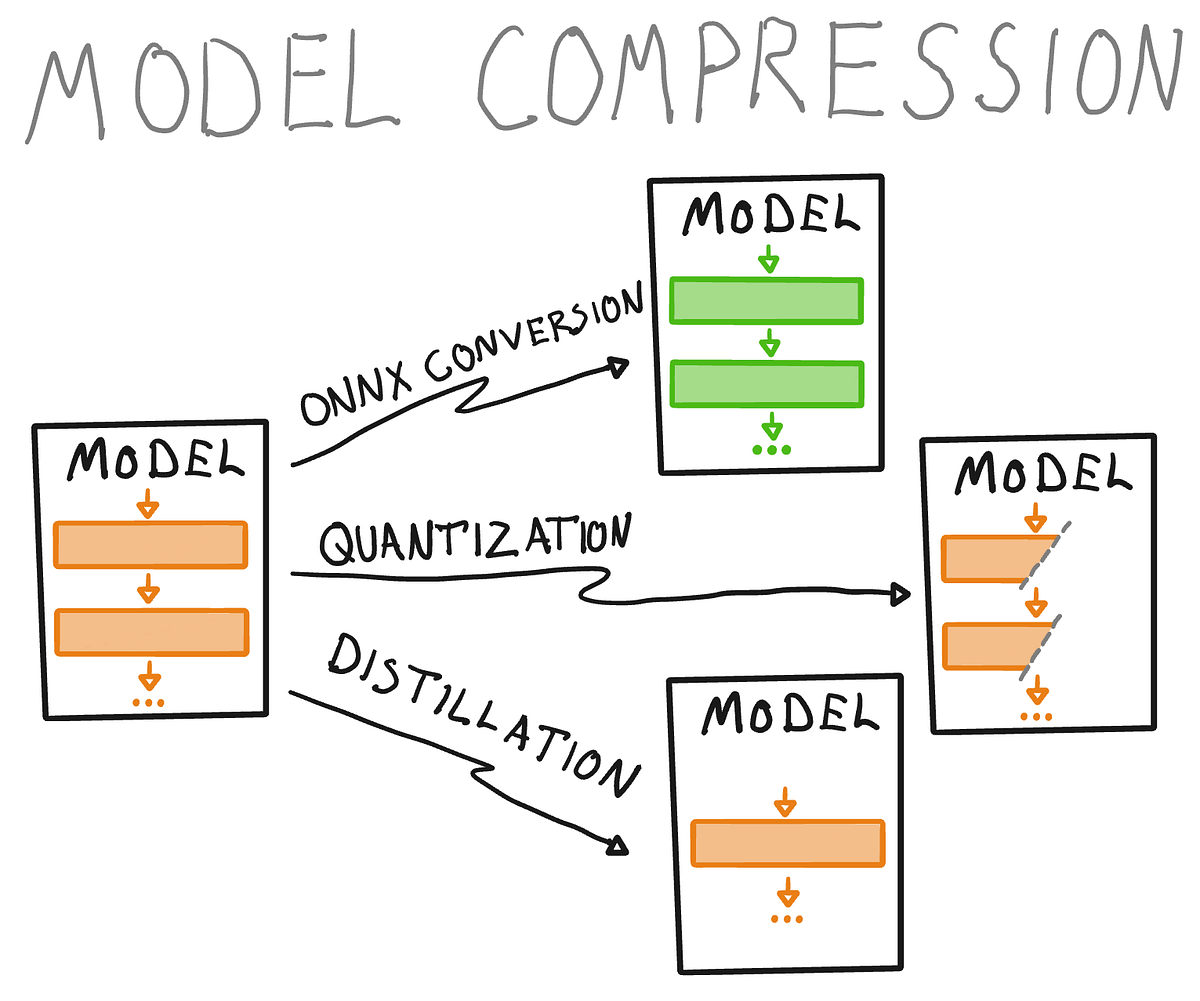

Model compression and optimization: Why think bigger when you can think smaller? | by David Williams | Data Science at Microsoft | Medium

Introduction to scaling Large Model training and inference using DeepSpeed | by mithil shah | Medium

Microsoft's Open Sourced a New Library for Extreme Compression of Deep Learning Models | by Jesus Rodriguez | Medium

[REQUEST] Add more device-agnostic compression algorithms · Issue #2894 · microsoft/DeepSpeed · GitHub

GitHub - microsoft/DeepSpeed: DeepSpeed is a deep learning optimization library that makes distributed training and inference easy, efficient, and effective.

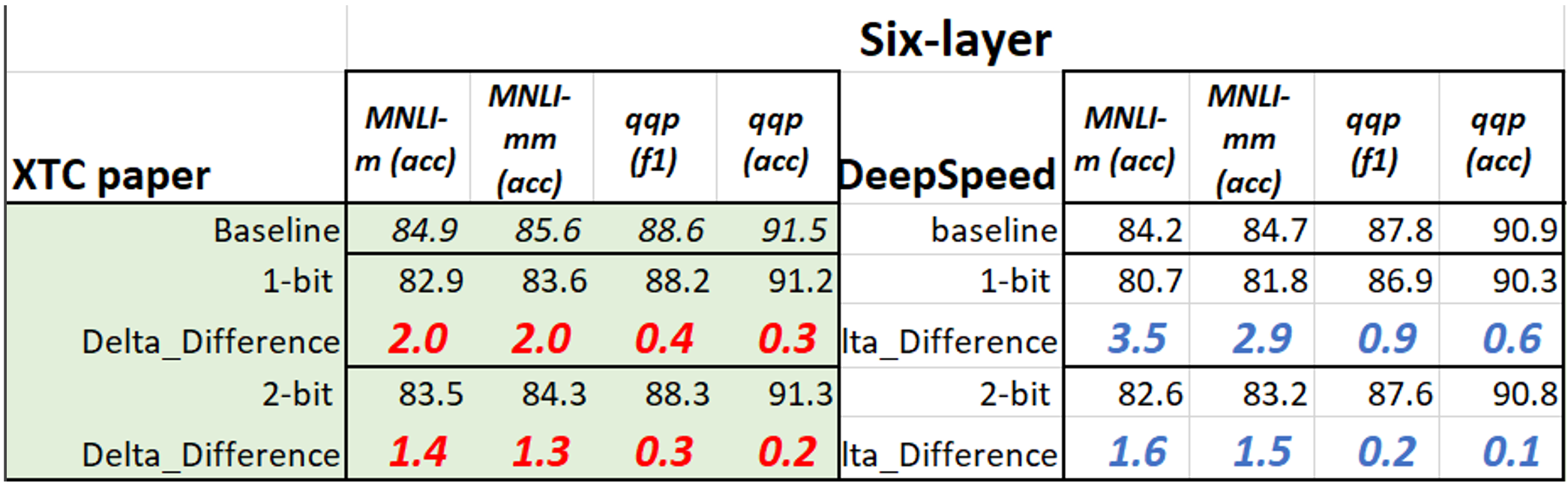
